The future is APIs

LLMs don't want pixels, they want well-defined tools.

Handwritten @ The Blue Bottle in Harvard Square, Cambridge MA.

From SOAP, to REST, to gRPC, to MCP — these interface design languages are all but certain to have the spotlight in an agentic world. Thank god... (except for maybe SOAP). Because I hope that AI is the accelerator the world needed to start caring more about its API designs, since they will determine how effective products are in an AI-rich world.

The user interface

Assuming you have a beating heart, my welcomed reader, you’re using an interface right now. Users interact with interfaces all the time. And we’ve all had to use a bad user interface at some point in our lives. Government websites are the easiest to highlight as purveyors of a “whatever, it works” mentality of interface design. Or NetSuite and ServiceNow. They’re on the same footing as government websites. Good or bad, interfaces are everywhere, illuminating our faces as we struggle to book a DMV appointment or watch a YouTube video on making sourdough starter.

And a poor interface design slows down the humans it was “designed” for. When a button isn’t obvious, or doesn’t do what you thought it did, that’s bad design. When the number of clicks to modify my profile is north of 20, that’s bad design. When user interface isn’t part of a business’s core competency, its likelihood of being replaced increases. It’s happening faster than ever now.

Because it’s not a coincidence that the fastest growing startups in the world have designs that are stunning when compared to the competition. Rippling vs ADP. Linear vs Atlassian. Mercury vs SVB. FireHydrant vs PagerDuty. Take any business from the Cloud100 list and compare it to its calcified competitors and you’ll see what I mean.

The programmatic interface

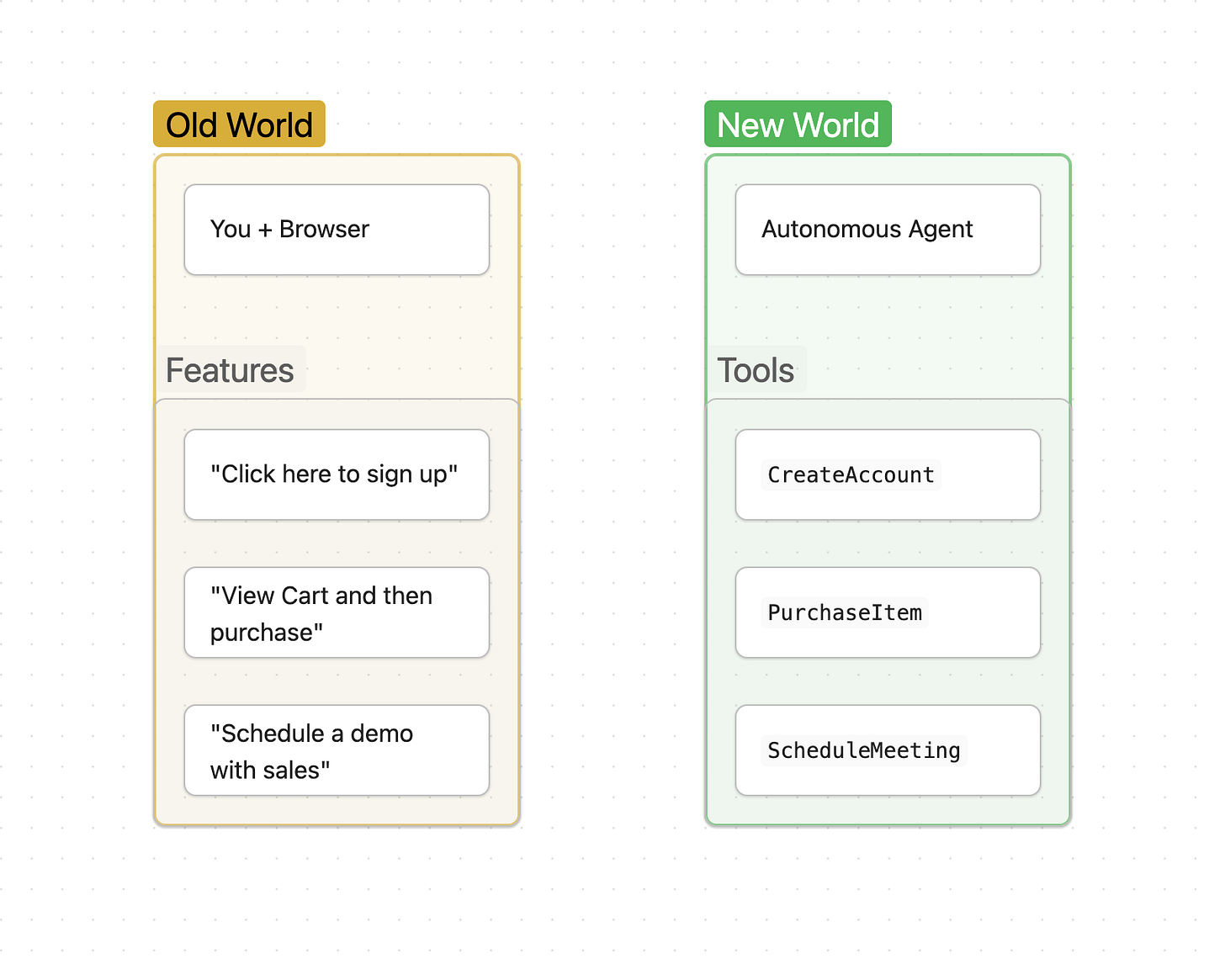

The interfaces that we see on our brightly-lit LEDs are not what AIs see [1]. They see “tools” when an agent is tasked with an objective. A tool, like a hammer, is rarely multipurpose (nor should it be). The types of tools you can provide an LLM are limitless, too. You could provide a tool called launch_rocket to an LLM and it will gleefully “use” it when it decides it is a good time to do so. It doesn’t know if a rocket actually launched.

This is a feature, not a bug, of LLMs with tool calling capabilities.

LLMs also don’t give a single shit about the implementation details of the tools given to them. They only know the tool’s name, when to use it, and what parameters they must provide.
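Here is a minimal sketch of what that looks like in practice. The field names (name, description, parameters) and the JSON Schema style are my assumptions, loosely modeled on the shape many tool-calling APIs use, and the launch_rocket body is purely hypothetical. The point is that only the definition gets serialized for the model; the function body never does.

```python
import json

# Hypothetical implementation. The model never sees this code,
# and nothing actually launches.
def launch_rocket(destination: str) -> str:
    return f"Rocket launched toward {destination}"

# Hypothetical tool definition: a name, when to use it, and the parameters.
LAUNCH_ROCKET_TOOL = {
    "name": "launch_rocket",
    "description": "Launch a rocket. Use only when the user explicitly asks for a launch.",
    "parameters": {
        "type": "object",
        "properties": {
            "destination": {
                "type": "string",
                "description": "Where the rocket should go.",
            }
        },
        "required": ["destination"],
    },
}

# This JSON text is everything the model gets to work with.
tool_as_seen_by_model = json.dumps(LAUNCH_ROCKET_TOOL, indent=2)
print(tool_as_seen_by_model)
```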

And the more intuitive the tool is, the better the large language model can use it. If you want to enable an AI agent to update a user’s name, you need to think about the same design principles a user-interface designer thinks about. The difference between v1ModifyUserProfileSave and update_user_profile_name is the equivalent of 1 click versus 10 clicks to get the same outcome.
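To make the contrast concrete, here is a rough sketch of the two names as full tool definitions. Everything beyond the two names is my own illustration: the descriptions, the parameters, and the undocumented_params helper are hypothetical. The vague version forces the model to guess what goes in an opaque payload, while the clear one spells out each input.

```python
# Two hypothetical definitions for the same action.
VAGUE_TOOL = {
    "name": "v1ModifyUserProfileSave",
    "description": "Saves profile.",
    "parameters": {
        "type": "object",
        "properties": {"payload": {"type": "object"}},
        "required": ["payload"],
    },
}

CLEAR_TOOL = {
    "name": "update_user_profile_name",
    "description": "Change the display name on a user's profile.",
    "parameters": {
        "type": "object",
        "properties": {
            "user_id": {"type": "string", "description": "ID of the user to update."},
            "new_name": {"type": "string", "description": "The name to display."},
        },
        "required": ["user_id", "new_name"],
    },
}

def undocumented_params(tool: dict) -> list[str]:
    """Return parameter names that give the model no description to read."""
    props = tool["parameters"]["properties"]
    return [name for name, spec in props.items() if not spec.get("description")]

print(undocumented_params(VAGUE_TOOL))  # ['payload']
print(undocumented_params(CLEAR_TOOL))  # []
```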

The book Don’t Make Me Think teaches common-sense web design, but a new book for developers designing APIs could be titled “Don’t Make the LLM Think.” The end user of an API is shifting from humans building software to LLMs.

The A of API could have a second meaning

I know many of you reading this will eye-roll. I don’t care. But the “Application” of API might soon shift to “Agent” — because agents are going to become the primary consumers of APIs, not applications we write ourselves to integrate with said interfaces. Agentic Programming Interface might not sound so silly in a few years.

Because at the end of the day, an API is no different than a tool. Both have a name, a purpose, and a parameter definition.
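A small sketch of that equivalence, under my own assumptions: the Endpoint class below is a hypothetical, stripped-down description of one API operation (not any real spec format), and endpoint_to_tool maps it field by field onto the name, purpose, and parameters shape a model would be handed.

```python
from dataclasses import dataclass, field

@dataclass
class Endpoint:
    """A hypothetical, simplified description of one API operation."""
    operation_id: str
    summary: str
    params: dict[str, str] = field(default_factory=dict)  # name -> JSON type

def endpoint_to_tool(endpoint: Endpoint) -> dict:
    """Map an endpoint onto the name / purpose / parameters shape of a tool."""
    return {
        "name": endpoint.operation_id,
        "description": endpoint.summary,
        "parameters": {
            "type": "object",
            "properties": {n: {"type": t} for n, t in endpoint.params.items()},
            # Treat every parameter as required to keep the sketch short.
            "required": list(endpoint.params),
        },
    }

endpoint = Endpoint(
    operation_id="update_user_profile_name",
    summary="Change the display name on a user's profile.",
    params={"user_id": "string", "new_name": "string"},
)
tool = endpoint_to_tool(endpoint)
print(tool["name"], tool["parameters"]["required"])
```

If the operation name and summary are bad, the tool is bad too. Nothing in the mapping can fix that for you.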

But… what if the API sucks?

(I hope) AI will accelerate API development

AI features can only transcend into “agents” when a generative model can perform actions on behalf of a user. An autonomous agent requires intelligence + outcomes. Tools are what give an LLM the limbs it needs to perform actions that deliver those outcomes.
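Roughly, one step of that loop can be sketched like this. The fake_model function is a stand-in I made up for a real LLM call, and the in-memory USERS store and tool registry are hypothetical. The model picks a tool and arguments (the intelligence); the registry runs real code that changes state (the outcome).

```python
from typing import Callable

# Hypothetical in-memory "database" so the tool has something real to change.
USERS = {"u_123": {"name": "Old Name"}}

def update_user_profile_name(user_id: str, new_name: str) -> str:
    USERS[user_id]["name"] = new_name
    return f"Updated {user_id} to {new_name}"

# Registry of tools the agent is allowed to use, keyed by tool name.
TOOLS: dict[str, Callable[..., str]] = {
    "update_user_profile_name": update_user_profile_name,
}

def fake_model(objective: str) -> dict:
    """Stand-in for an LLM: returns which tool to call and with what arguments."""
    return {
        "tool": "update_user_profile_name",
        "arguments": {"user_id": "u_123", "new_name": "Ada Lovelace"},
    }

def run_agent_step(objective: str) -> str:
    call = fake_model(objective)      # the "intelligence"
    tool = TOOLS[call["tool"]]        # look up the limb by name
    return tool(**call["arguments"])  # the "outcome", as reported by the tool

result = run_agent_step("Rename user u_123 to Ada Lovelace")
print(result)
```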

Up until now, a shitload of software functionality was built with a screen in mind. I’d estimate 20% (or more) of the software we have built was to display and give access to functionality in an intuitive way for humans. Be it an iPhone screen, laptop, or television, the interface through which we predominantly consume and use software is a screen and a keyboard.

But that mode of using software is being shaken to its core. Because when AIs are doing the work, they don’t look at a screen. They look at the tools they have, paired with the context given, and get to work. They are headless. OpenAI and Anthropic aren’t much more than chat interfaces to the humans using them.

The only logical conclusion in this new world is that APIs will be the thing software engineers begin to design and care more about. MCP servers are the first example of that. Almost overnight, businesses had to deploy well-defined APIs for LLMs to use. No buttons, no CSS, no HTML. Pure APIs.

My bold prediction

Before the internet, we had applications on disks whose contents we installed onto our machines. Then the browser came along and unlocked a new world of software and how we use it. Businesses shifted from writing software for macOS and Windows to writing it for browsers to display and make their content usable. Browsers also made their software available to an enormous audience.

Anthropic, OpenAI, and many others represent a shift in how people consume software. And businesses need to decide once again if they want to continue building the layer people use to access their software (the frontend, browser-based stack), or something an AI can use.

This is a predicament for existing SaaS businesses of all scales. A huge part of their R&D is allocated to browsers, and shifting the entire business to build only LLM-accessible APIs is a large and (likely) premature bet. For now.

If you aren’t reading up on protocols like MCP, A2A, and ACP, or tools like Envoy AI Gateway, Stainless, Speakeasy, E2B, and Temporal, now is the time to start [2].

You either need to become an API company, built for usage by LLMs and agents, or you will die.

[1] AI models can understand images, but not in a way that makes them very usable for clicking around a user interface effectively.

[2] This is the tech and tooling I’m excited about right now; the list is not exhaustive.